Recent Improvements (May 2016)

Creators: Tom Elliott

Copyright © The Contributors. Sharing and remixing permitted under terms of the Creative Commons Attribution 3.0 License (cc-by).

Last modified: Jun 03, 2016 05:01 PM

On May 13th, 2016, the Pleiades 3 development team deployed a package of upgrades to the Pleiades website. This blog post summarizes the changes.

The primary concern that motivated the Institute for the Study of the Ancient World at New York University to apply for a Digital Humanities Implementation grant for Pleiades from the National Endowment for the Humanities was website speed and reliability. The grant was awarded in August 2015 and work began in earnest in January 2016.

Late on the 13th of May, 2016, software developers from Jazkarta, Inc., who are working on the grant under subcontract to NYU, deployed a series of software upgrades designed primarily to address site performance. These upgrades were deployed to a new, more capable web server, provided under contract by long-time Pleiades hosting provider Tummy.com. During the subsequent two weeks, Jazkarta and Tummy personnel have collaborated with members of the Pleiades editorial college to monitor, analyze, and tune these upgrades for optimum performance.

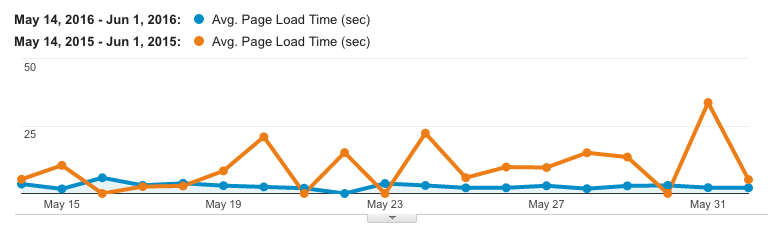

Compared with the same period two years ago, the average download time for Pleiades pages has improved by nearly 75%, from a painfully long 12 seconds down to about 3 seconds. The accompanying plot, generated with Google Analytics, provides a day-by-day visual comparison.
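The percentage follows directly from the two rounded averages. A small sketch of the arithmetic, using the approximate figures quoted above (the function name is illustrative and not part of any Pleiades code):

```python
def percent_improvement(before_s: float, after_s: float) -> float:
    """Return the reduction from before_s to after_s as a percentage of before_s."""
    if before_s <= 0:
        raise ValueError("before_s must be positive")
    return (before_s - after_s) / before_s * 100.0


# Rounded figures from the post: about 12 seconds before, about 3 seconds after.
improvement = percent_improvement(12.0, 3.0)
print(f"{improvement:.0f}% shorter average page download time")
```

Because both inputs are rounded, the result is only as precise as the "about 12" and "about 3" second averages, which is why the post says "nearly".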

Google defines average page download time as follows: "the average amount of time (in seconds) it takes for pages from the sample set to load, from initiation of the page view (e.g. click on a page link) to load completion in the browser."

Improved and Reinstated Features

These performance improvements have been achieved even though we have re-enabled or upgraded several features that were aging, had failed, or had been disabled previously in order to combat periodic site slowdowns and failures. These include:

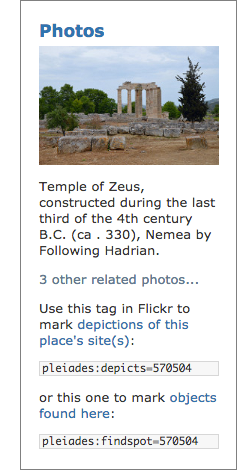

The Flickr "Photos" Portlet

The Pleiades Flickr Photos Portlet once again appears on each place page. It automatically queries the Flickr photo-sharing website for images that have been "machine tagged" using Pleiades identifiers. The portlet constructs a link to all such images. Moreover, if the editorial college has identified one of these images as a "place portrait image" by adding it to the Pleiades Places Group on Flickr, that image is displayed in the portlet. The portlet was updated to work with the latest version of Flickr's Application Programming Interface (API).

The screen capture at right shows the Flickr Photos Portlet as it appears on the Pleiades place page for ancient Nemea in Greece. The featured portrait photo was taken and published on Flickr under open license by Carole Raddato (a.k.a. Following Hadrian).
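To make the machine-tag lookup concrete, here is a minimal sketch of how such a query could be composed against Flickr's REST endpoint using the `flickr.photos.search` method and its `machine_tags` parameter. It is not the portlet's actual code: the `pleiades:depicts=` tag form, the placeholder place ID, and the function name are assumptions made for illustration, and a real request needs a valid Flickr API key.

```python
from urllib.parse import urlencode

FLICKR_REST = "https://api.flickr.com/services/rest/"


def flickr_machine_tag_query(pleiades_id: str, api_key: str) -> str:
    """Build a flickr.photos.search URL for photos machine-tagged with a place ID.

    The "pleiades:depicts=<id>" tag form is an assumption for this sketch.
    """
    params = {
        "method": "flickr.photos.search",
        "api_key": api_key,
        "machine_tags": f"pleiades:depicts={pleiades_id}",
        "format": "json",
        "nojsoncallback": "1",
    }
    return f"{FLICKR_REST}?{urlencode(params)}"


# "123456" is a placeholder ID and "YOUR_API_KEY" a placeholder key.
print(flickr_machine_tag_query("123456", "YOUR_API_KEY"))
```

The sketch only builds the URL, so it can be inspected without making a network request.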

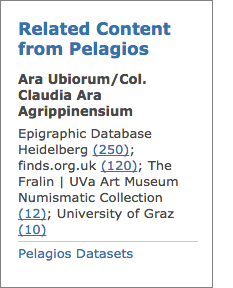

The Pelagios "Related Content" Portlet

The collaborative Pelagios Commons project (funded by the Andrew W. Mellon Foundation) has been a long-time Pleiades partner. Through its Peripleo website, the Pelagios community collates and maps open data about the ancient world that has been published online by over twenty (and growing) academic projects, museums, libraries, and archives. Following procedures laid down by the Pelagios team, these data providers include in their publications information about the relationships between ancient places (as represented in Pleiades) and the artifacts, art works, texts, historical persons, and other objects of interest registered, described, or depicted in their data.

The Pleiades Pelagios Related Content Portlet appears on each place page (screen shot at left from the Pleiades place page for ancient Ara Ubiorum, modern Cologne). It automatically queries the Pelagios Peripleo website for related records in other databases and returns a summary of matching records. The portlet creates links to a Peripleo query for each matching dataset. The portlet has been updated to work with the latest version of the Peripleo API.

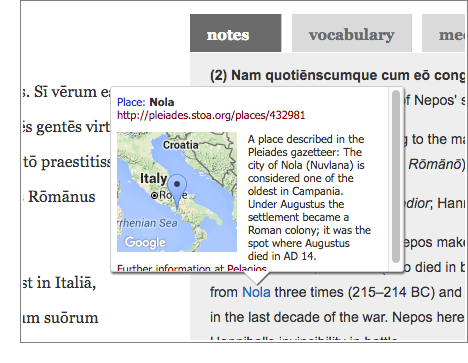

Dickinson College Commentaries (DCC) and the AWLD.js

The Dickinson College Commentaries (and several other websites) make use of the Ancient World Linked Data JavaScript Library (AWLD.js) to enhance links to Pleiades (and other data sources) with informative pop-up windows as illustrated in the screen capture at right. AWLD.js creates these pop-ups in each user's browser by scanning the current page for links to select websites (like Pleiades). It then pre-fetches information from those websites -- in Pleiades' case, it requests the GeoJSON serialization for each place link -- and uses the responses to build the pop-ups.
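The scan-then-prefetch pattern described above can be sketched in a few lines. This is a Python illustration of the idea only, not AWLD.js code: the regular expression, the function name, and the `/json` suffix used to address a place's JSON serialization are assumptions made for the example.

```python
import re

# Matches links to Pleiades place pages and captures the numeric place ID.
PLACE_LINK = re.compile(r'href="https?://pleiades\.stoa\.org/places/(\d+)/?"')


def geojson_urls(html: str) -> list[str]:
    """Return one JSON URL per distinct Pleiades place linked from the page."""
    urls = (
        f"https://pleiades.stoa.org/places/{match.group(1)}/json"
        for match in PLACE_LINK.finditer(html)
    )
    # dict.fromkeys drops repeats while keeping first-seen order.
    return list(dict.fromkeys(urls))


page = (
    '<a href="https://pleiades.stoa.org/places/123">first place</a> '
    '<a href="https://pleiades.stoa.org/places/123">same place again</a> '
    '<a href="https://example.org/other">unrelated link</a>'
)
print(geojson_urls(page))
```

Note that every distinct place link on a page becomes one request to the server each time the page loads, so a page with many links multiplies the traffic generated by each visitor.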

Prior to the most recent upgrade, the Pleiades website was vulnerable to performance degradation when receiving multiple page (or JSON serialization) requests in a short amount of time. Consequently, a web page with several Pleiades links and AWLD.js (especially when visited by multiple users at one time, as might happen during a class session) could significantly slow or even disable Pleiades for a period of time. To mitigate such effects, we had configured our server to block large numbers of requests coming from a particular external page. This meant that users of sites like DCC often got incomplete pop-ups or even non-working links. The upgraded website is no longer vulnerable to these effects, and so we have updated the server configuration to ensure the best possible user experience for our AWLD.js friends.
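For readers curious how blocking "large numbers of requests coming from a particular external page" can work in principle, here is a minimal sliding-window sketch keyed on the referring page. It is purely illustrative: the actual Pleiades server configuration is not described in this post, and the class name, limits, and example URL are hypothetical.

```python
from collections import defaultdict, deque


class RefererThrottle:
    """Allow at most max_requests per referring page within window_s seconds."""

    def __init__(self, max_requests: int, window_s: float) -> None:
        self.max_requests = max_requests
        self.window_s = window_s
        self._hits = defaultdict(deque)  # referer -> timestamps of allowed requests

    def allow(self, referer: str, now: float) -> bool:
        hits = self._hits[referer]
        # Forget requests that have aged out of the window.
        while hits and now - hits[0] >= self.window_s:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # over the limit: this request would be blocked
        hits.append(now)
        return True


throttle = RefererThrottle(max_requests=2, window_s=10.0)
for t in (0.0, 1.0, 2.0):
    print(t, throttle.allow("https://example.org/commentary-page", now=t))
```

The third request within the window is refused, which mirrors the incomplete pop-ups described above: a page that triggers many lookups at once sees some of them fail.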

Bots, Spiders, and Crawlers

Requests for Pleiades resources don't just come from browsers. A variety of automated agents (often called bots, spiders, or crawlers) gather information from websites for all manner of purposes. The major search engines (e.g., Google, Bing, Baidu), as well as many minor ones, employ bots to maintain and update the digital indices of web content that underpin the services they provide. The Internet Archive operates a bot that retrieves web pages for inclusion in the Wayback Machine. Some Pleiades and Pelagios partner projects use bots to gather, check, or update local copies of Pleiades data for project use. Still other bots serve commercial data-mining purposes, or academic or student research projects in computer and information science. And there are bots that serve more nefarious purposes, scanning servers and software for security vulnerabilities that can be exploited or hacked. Bots that make many rapid-fire requests, that ignore usage guidelines set forth in the Pleiades robots.txt file, or that happen to visit concurrently with other bots have all caused performance problems for Pleiades in the past.
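A well-behaved bot checks robots.txt before fetching anything. The sketch below shows one way to do that with Python's standard `urllib.robotparser` module. The robots.txt content, the bot name, and the URLs are invented for the example and do not reproduce the actual Pleiades file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules for illustration only; not the real Pleiades robots.txt.
ROBOTS_TXT = """\
User-agent: *
Crawl-delay: 5
Disallow: /search
"""


def make_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = make_parser(ROBOTS_TXT)
bot = "example-bot"  # hypothetical user-agent name
print(parser.can_fetch(bot, "https://pleiades.stoa.org/places/1"))
print(parser.can_fetch(bot, "https://pleiades.stoa.org/search?q=x"))
print(parser.crawl_delay(bot))  # seconds to wait between requests, if declared
```

A crawler that honors both the disallow rules and the crawl delay spreads its requests out over time, which is what keeps it from behaving like the rapid-fire bots described above.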

The same traffic-limiting measures described in the preceding section were also designed to reduce the impact of bots. These restrictions have now been removed. The upgraded site is now serving the Google, Bing, and Archive.org bots -- as well as all other third-party bots without a history of misbehavior -- at full speed and without restriction.

Next Steps

Another key aspect of the upgrade was the installation of tools to perform "software analytics" on the Pleiades website. This new feature, which has been implemented with the commercial New Relic service, provides Pleiades software developers and managing editors with performance metrics gathered from within each component of the Pleiades web application. The resulting insight enables us to tune performance in specific areas and to target code components for additional, performance-enhancing upgrade or replacement. We will continue in coming weeks to monitor site performance, using New Relic and Google Analytics, to seek additional improvements.
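As a rough illustration of what "metrics gathered from within each component" means, the sketch below times individual functions and records the results per component. It is a generic, hand-rolled example and does not use or imitate the New Relic agent; the component name and the sample function are hypothetical.

```python
import time
from collections import defaultdict
from functools import wraps

# component name -> list of elapsed times in seconds
TIMINGS = defaultdict(list)


def timed(component: str):
    """Decorator that records how long each call to the wrapped function takes."""
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            try:
                return func(*args, **kwargs)
            finally:
                # Record the duration even if the call raised an exception.
                TIMINGS[component].append(time.perf_counter() - start)
        return wrapper
    return decorator


@timed("catalog_query")  # hypothetical component name
def catalog_query(term: str) -> list[str]:
    places = ("Nemea", "Ara Ubiorum")
    return [p for p in places if term.lower() in p.lower()]


catalog_query("nem")
print({name: len(samples) for name, samples in TIMINGS.items()})
```

Per-component timings like these make it possible to see which part of a request is slow, rather than only knowing that the page as a whole took a long time.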

We are also undertaking a number of feature enhancements, as outlined in the original proposal. At present, planning and coding work is underway on upgrades to our:

Updates will be announced on this blog.

Technical Specifics

Major changes incorporated in the recent upgrade include:

- Moved to a new dedicated host, running Ubuntu 16.04 LTS, with more memory and faster processors

- Upgraded the web application framework and content management system from Plone 3 to Plone 4.3

- Bug fixes in core code for speed, lower memory footprint, and more efficient use of the Zope catalog and database

- More efficient population of pages, maps, and editors' dashboards and review lists

- More efficient creation of alternate serializations of content and export "dumps" via the downloads page

- Removed under- and un-used code components and plugins

- Eliminated LDAP for user account management

- Fixes to icons, map symbols, and vocabulary displays

- Scripted deployment with Ansible

Users are invited to follow progress, report problems, and request features via the Pleiades gazetteer issue tracker on GitHub.
